the unstuck machine (save this)

the 6-step recovery system for when openclaw doctor, logs, and status still don’t tell you what actually broke

most people i chat with privately aren’t getting stuck in openclaw because the model is too weak.

they get stuck because their recovery model is weak or doesn’t even exist.

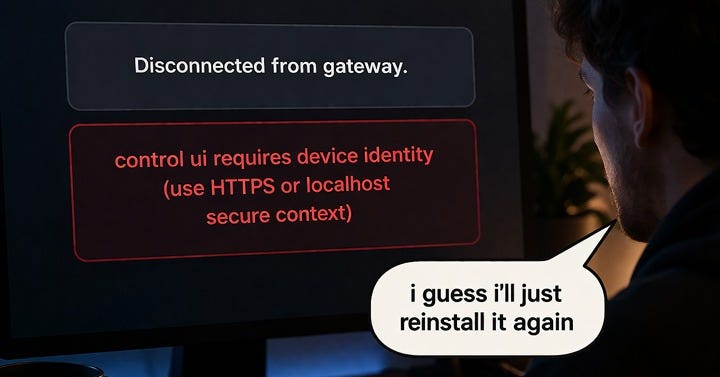

something breaks. they run doctor. they restart the gateway. they maybe blame the provider. then they lose an hour chasing the wrong surface.

that’s a huge trap.

openclaw already gives you a better first ladder than most people use. the docs list openclaw status, openclaw status --all, openclaw gateway probe, openclaw gateway status, openclaw doctor, openclaw channels status --probe, and openclaw logs --follow as your first 60 seconds of triage. that’s the clue. the real failure is often smaller and more boring than you’d expect. transport. policy. auth. workspace drift. not “the whole system is broken.”
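if you want the ladder to leave a record instead of scrolling past, here’s a minimal python sketch that runs each rung in order and keeps the output. it only uses the commands listed above; the StepResult shape, the 60-second timeout, and the choice to skip openclaw logs --follow (it streams until you stop it) are my assumptions, not anything openclaw ships.

```python
from __future__ import annotations

import subprocess
from dataclasses import dataclass
from typing import Optional

# the first-60-seconds ladder, in the order the docs list it.
# `openclaw logs --follow` is left out on purpose: it streams until you
# stop it, so run it in its own terminal instead of capturing it here.
LADDER = [
    ["openclaw", "status"],
    ["openclaw", "status", "--all"],
    ["openclaw", "gateway", "probe"],
    ["openclaw", "gateway", "status"],
    ["openclaw", "doctor"],
    ["openclaw", "channels", "status", "--probe"],
]


@dataclass
class StepResult:
    command: str
    returncode: Optional[int]  # None = the command never finished
    output: str                # stdout and stderr together


def run_step(argv: list[str], timeout: float = 60.0) -> StepResult:
    """run one rung and record what happened, including failures to run at all."""
    cmd = " ".join(argv)
    try:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    except FileNotFoundError:
        return StepResult(cmd, None, "binary not found on PATH")
    except subprocess.TimeoutExpired:
        return StepResult(cmd, None, f"timed out after {timeout:g}s")
    return StepResult(cmd, proc.returncode, proc.stdout + proc.stderr)


def run_ladder(ladder: list[list[str]] = LADDER) -> list[StepResult]:
    # keep going past a failing rung: the goal is evidence from every rung,
    # not stopping at the first error.
    return [run_step(argv) for argv in ladder]
```

call run_ladder() on the gateway host and print each result’s command, exit code, and output. if your version of openclaw doctor stops to ask before applying a repair, run that rung by hand instead; otherwise the timeout will cut it off.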

if you’re reading this, you’re probably running openclaw on something real or trying to. a local box, a vps, a pi, a hetzner rig, something hybrid, something you’ve already sunk time into. and the same pressure points keep showing up: reliability, memory, setup confusion, security, environment fragmentation.

this is for when it breaks and you need to know where.

what doctor is, and what doctor isn’t

doctor matters.

it can catch real config and service problems, recommend repairs, and even apply safe fixes or run deeper repair flows when needed. but it’s still one sensor inside the recovery model, not the whole recovery model. the docs describe doctor as supporting safe migrations, repair paths, and deeper scans; they don’t present it as a replacement for judgment.

and that distinction matters more than it sounds, because of three things most people discover the hard way.

auth isn’t one surface. credentials aren’t shared automatically across agents. each agent has its own auth profile under its own directory. so when one agent works and another fails, the easy explanation is “openclaw is inconsistent.” the real explanation is usually simpler: you’re not looking at one auth surface. you’re looking at several.
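one way to make that concrete: write down, per agent, which providers it actually has credentials for, then diff the agents against each other. the sketch below is plain python over a dict you fill in by hand. it doesn’t read openclaw’s auth profile files, and the agent and provider names in any example are hypothetical.

```python
from __future__ import annotations


def auth_gaps(agent_providers: dict[str, set[str]]) -> dict[str, set[str]]:
    """for each agent, the providers some other agent has credentials for but it doesn't.

    agent_providers is an inventory you fill in by hand, e.g. after checking
    each agent's own auth profile; credentials are not shared across agents.
    """
    everything = set().union(*agent_providers.values())
    gaps = {}
    for agent, providers in agent_providers.items():
        missing = everything - providers
        if missing:
            gaps[agent] = missing
    return gaps
```

an empty result means every agent sees the same providers. anything else names the agent that’s missing a credential the others have, which is usually the whole “one agent works, the other doesn’t” story.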

memory isn’t a feature. it’s files. openclaw memory is plain markdown in the agent workspace. the files are the source of truth. if the wrong workspace is active, or memory isn’t getting written to disk, memory will feel broken even when the feature is working correctly. the failure is usually in the workspace path or write layer, not the memory system itself.
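because memory is files, you can check it like files. this sketch assumes you know the agent’s workspace path (you pass it in; no default location is assumed) and just reports whether the directory exists, how many markdown files it holds, and how long ago the newest one changed.

```python
from __future__ import annotations

import time
from pathlib import Path
from typing import Optional


def memory_snapshot(workspace: str | Path, now: Optional[float] = None) -> dict:
    """report what's actually on disk in an agent workspace: the files are the truth."""
    root = Path(workspace)
    report = {"workspace_exists": root.is_dir(), "markdown_files": 0,
              "newest": None, "age_seconds": None}
    if not report["workspace_exists"]:
        return report
    files = [p for p in root.rglob("*.md") if p.is_file()]
    report["markdown_files"] = len(files)
    if files:
        # most recently modified markdown file = the last thing memory wrote
        newest = max(files, key=lambda p: p.stat().st_mtime)
        now = time.time() if now is None else now
        report["newest"] = str(newest)
        report["age_seconds"] = now - newest.stat().st_mtime
    return report
```

a workspace that exists but whose newest file is hours old, while the agent claims to be remembering things, usually points at the write layer or the wrong workspace rather than the memory feature.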

the gateway assumes one trusted operator boundary. the security docs are blunt about this. it’s not designed for hostile multi-tenant operation where adversarial users share one agent or gateway. if you need mixed-trust operation, you split trust boundaries with separate gateways and ideally separate os users or hosts. that one design truth explains a lot of “weird” behavior people file under instability. sometimes the issue isn’t the feature. it’s the boundary.
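a quick way to audit that boundary is to list who reaches which gateway and at what trust level, then flag any gateway that mixes levels. the inventory dicts and trust labels here are hypothetical; you’d keep them yourself, openclaw doesn’t produce them.

```python
from __future__ import annotations

from collections import defaultdict


def mixed_trust_gateways(user_gateway: dict[str, str],
                         user_trust: dict[str, str]) -> dict[str, set[str]]:
    """return each gateway that serves users at more than one trust level.

    user_gateway maps user -> gateway name; user_trust maps user -> a trust
    label you choose (e.g. "operator", "untrusted"). both are your own inventory.
    """
    levels = defaultdict(set)
    for user, gateway in user_gateway.items():
        levels[gateway].add(user_trust[user])
    # one trust level per gateway is the boundary the docs assume
    return {gw: lv for gw, lv in levels.items() if len(lv) > 1}
```

the fix the docs point to is structural: move the lower-trust users to their own gateway, ideally under a separate os user or host, until this returns an empty dict.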

when a stack starts feeling fragile, the problem usually isn’t “openclaw is broken.”

it’s that you don’t know which layer failed yet.

that’s the real skill gap.

the free version first

if you want the lighter version first, start here.

this is the free unstuck machine. open it in chatgpt, or copy the prompt into your llm of choice and run it there.

the five-minute model

start with the real gateway host.

not another laptop. not another terminal. not the machine you wish were the source of truth.

run the base ladder first.

openclaw status

openclaw status --all

openclaw gateway probe

openclaw gateway status

openclaw doctor

openclaw channels status --probe

openclaw logs --follow

if the break smells like auth or routing: the observable signal is a gateway that responds but model calls return 401, hang on auth, or fail silently on a second agent while the first runs fine. add openclaw models status. if auth still looks ambiguous, escalate to openclaw models status --probe. that one’s a live probe, so it’s a sharper tool, not the default one.
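here’s the auth branch as a small decision sketch. the two commands are the ones named above; the inputs (whether the gateway answered, the http status you saw, which agents worked) are things you read off the ladder yourself, and the function names are hypothetical.

```python
from __future__ import annotations

from typing import Optional

# commands named in the auth/routing branch. `--probe` makes live calls,
# so it's the escalation, not the default.
MODELS_STATUS = ["openclaw", "models", "status"]
MODELS_STATUS_PROBE = ["openclaw", "models", "status", "--probe"]


def smells_like_auth(gateway_responds: bool,
                     model_http_status: Optional[int],
                     agent_ok: dict[str, bool]) -> bool:
    """true when the observed signals match the auth/routing branch."""
    if not gateway_responds:
        return False  # transport first: auth can't be judged through a dead gateway
    if model_http_status == 401:
        return True
    # one agent works while another fails: per-agent auth is the usual suspect
    return any(agent_ok.values()) and not all(agent_ok.values())


def auth_commands(still_ambiguous: bool) -> list[list[str]]:
    """start with the plain status; add the live probe only if that didn't settle it."""
    return [MODELS_STATUS, MODELS_STATUS_PROBE] if still_ambiguous else [MODELS_STATUS]
```

note the order: a gateway that doesn’t answer is a transport problem first, so the sketch refuses to call it auth until that’s ruled out.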

if the break smells like memory: the observable signal is agent responses that feel stale, wrong-context, or disconnected from recent writes, especially after a workspace change or agent swap. add openclaw memory status --deep. check that the right workspace is active and that memory is writing to disk before you look anywhere else.
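two of those checks, right workspace and writable disk, are easy to script. this is a sketch under assumptions: you supply the workspace path you expect and the one the agent is actually using (read it from the memory status output or your config), and the write probe uses a throwaway non-markdown file that’s deleted immediately so it can’t be mistaken for memory.

```python
from __future__ import annotations

import os
import tempfile
from pathlib import Path

# the deeper memory check named in this branch.
MEMORY_DEEP = ["openclaw", "memory", "status", "--deep"]


def same_workspace(expected: str | Path, active: str | Path) -> bool:
    """true if two paths name the same directory once symlinks and '..' are resolved."""
    return os.path.realpath(expected) == os.path.realpath(active)


def workspace_writable(workspace: str | Path) -> bool:
    """true if a file can be created and written inside the workspace directory."""
    root = Path(workspace)
    if not root.is_dir():
        return False
    try:
        # throwaway non-markdown file, deleted on close, so it never reads as memory
        with tempfile.NamedTemporaryFile(dir=str(root), suffix=".probe") as fh:
            fh.write(b"ok")
            fh.flush()
        return True
    except OSError:
        return False
```

if same_workspace is false, stop there: that’s workspace drift, and no amount of memory tuning will fix it.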

if the break is “bot is online but nobody gets replies”: the observable signal is a clean dashboard with no delivery errors but zero responses reaching users. add the pairing and channel-config checks before you start blaming the model. a channel can be connected and still fail because pairing is pending, allowlists are wrong, mention gating is blocking, or channel policy is tighter than you think. each of those is a different fix.
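each of those gates is a separate yes/no, and the first one that fails names the fix. the ChannelState fields below are hypothetical; you’d fill them from openclaw channels status --probe and your channel config, and the gate order is my assumption about what to rule out first.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class ChannelState:
    # hypothetical fields: fill them in from `openclaw channels status --probe`
    # and your channel config; openclaw doesn't hand you this object.
    connected: bool
    pairing_pending: bool
    sender_allowlisted: bool
    mention_required: bool
    message_mentions_bot: bool
    policy_allows_reply: bool


def first_blocking_gate(s: ChannelState) -> Optional[str]:
    """name the first gate that stops a reply, or None if none of these do."""
    if not s.connected:
        return "channel not connected"
    if s.pairing_pending:
        return "pairing pending"
    if not s.sender_allowlisted:
        return "sender not on allowlist"
    if s.mention_required and not s.message_mentions_bot:
        return "mention gating"
    if not s.policy_allows_reply:
        return "channel policy"
    return None
```

None here means the channel side looks clean, which is the point where it’s fair to start looking at the model.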

one rule makes all of this easier to remember.

never trust narration.

trust the smallest piece of evidence that proves where the break really lives.

one operator example

take the ml platform engineer building a behavior-aware engineering copilot with context aggregation, secure action execution, and memory across sessions.

that setup touches every layer this ladder covers. memory has to write correctly across agent sessions. auth has to be clean per-agent or the copilot silently loses access to tools mid-session. the trust boundary has to be set correctly or tool execution behaves unpredictably in multi-agent configs.

when that stack breaks, “it stopped working” isn’t a diagnosis.

“the memory branch shows workspace drift after the last agent restart” is a diagnosis. that routes to a specific fix. that’s the difference between five minutes and an hour.

you’re not here to guess. you’re here to know.

what the paid version actually unlocks

the paid version isn’t more commentary.

it’s the full recovery asset.

the full unstuck machine gives you the deeper 6-step recovery flow, tighter branch logic, stronger diagnosis, exact helper-agent prompts, verification steps, and escalation logic.

it forces the right separation between symptom families.

no replies aren’t the same problem as auth drift. auth drift isn’t the same problem as memory weirdness. memory weirdness isn’t the same problem as a tools or browser failure.

it forces evidence capture before diagnosis.

that sounds obvious.

it isn’t.

this is where most people lose the most time, and the most provider spend. every blind restart, every redundant probe, every “let me try the model again” costs real calls. a recovery system that routes you to the right surface in step one pays for itself in the second incident.
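in practice, evidence capture can be as simple as writing every command’s output to a timestamped file before you touch anything. a minimal sketch; the directory, the file naming, and the label sanitizing are all assumptions.

```python
from __future__ import annotations

from datetime import datetime
from pathlib import Path
from typing import Optional


def capture_evidence(outdir: str | Path, label: str, text: str,
                     when: Optional[datetime] = None) -> Path:
    """write one piece of raw evidence to a timestamped file and return its path."""
    when = when or datetime.now()
    # keep labels filesystem-safe: letters, digits, '-' and '_' only
    safe = "".join(c if c.isalnum() or c in "-_" else "_" for c in label)
    path = Path(outdir) / f"{when:%Y%m%dT%H%M%S}-{safe}.txt"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(text, encoding="utf-8")
    return path
```

save the ladder output, the relevant log slice, and the exact error text first, then diagnose from the files, not from your memory of what scrolled by. two captures with the same label in the same second overwrite each other, so give repeats distinct labels.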

it checks environment and scope.

one agent is different from many agents. one local mac is different from a remote host. a hybrid setup creates its own kind of confusion.

whether you’re on a mac, a pi, a vps, hetzner, mixed linux, or something cloud-adjacent, environment-specific recovery isn’t optional. it’s table stakes.

it checks what changed before the break.

update. provider swap. new skill. browser mode change. permission drift.

that’s often the shortest path to the truth.
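if you keep even a rough snapshot of the stack before changes (version, provider, installed skills, browser mode, permissions), the diff is that shortest path. the snapshot keys are hypothetical; use whatever you actually record.

```python
from __future__ import annotations

_ABSENT = "<absent>"  # marks a key that exists in only one snapshot


def what_changed(before: dict[str, object],
                 after: dict[str, object]) -> dict[str, tuple[object, object]]:
    """every key whose value differs between two snapshots, as (before, after)."""
    changes = {}
    for key in sorted(before.keys() | after.keys()):
        old = before.get(key, _ABSENT)
        new = after.get(key, _ABSENT)
        if old != new:
            changes[key] = (old, new)
    return changes
```

for example, a before snapshot of {"provider": "a"} against an after snapshot of {"provider": "b", "browser_mode": "y"} reports both the provider swap and the browser mode that appeared.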

it branches into the right deep probe.

channel and dashboard problems get pushed toward pairing, allowlists, token drift, mention gating, and delivery policy.

auth problems get pushed toward per-agent auth, wrong provider names, model alias issues, and service-versus-shell mismatch.

memory problems get pushed toward workspace path, file-backed truth, retrieval readiness, and whether the right agent is even looking at the right files.

tools and browser problems get pushed toward policy, approvals, missing binaries, filtered skills, browser profile mode, and workflow logic.
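that branching maps onto a lookup table: one symptom family, one ordered list of deep probes, no mixing. this is a sketch of that shape, not the paid prompt itself; the family keys are my labels and the check names are copied from the list above.

```python
from __future__ import annotations

from typing import Iterable

# family -> ordered deep probes, copied from the list above.
# the family keys are labels chosen for this sketch.
DEEP_PROBES: dict[str, tuple[str, ...]] = {
    "channels": ("pairing", "allowlists", "token drift", "mention gating",
                 "delivery policy"),
    "auth": ("per-agent auth", "wrong provider names", "model alias issues",
             "service-versus-shell mismatch"),
    "memory": ("workspace path", "file-backed truth", "retrieval readiness",
               "right agent, right files"),
    "tools": ("policy", "approvals", "missing binaries", "filtered skills",
              "browser profile mode", "workflow logic"),
}


def next_checks(family: str, done: Iterable[str] = ()) -> list[str]:
    """remaining deep probes for one family; families are never merged."""
    if family not in DEEP_PROBES:
        raise ValueError(f"unknown symptom family {family!r}; pick one of {sorted(DEEP_PROBES)}")
    finished = set(done)
    return [check for check in DEEP_PROBES[family] if check not in finished]
```

asking for one family at a time is the point: it keeps a no-replies incident from quietly turning into an auth investigation.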

and it doesn’t stop at diagnosis.

it outputs:

diagnosis

smallest proven root cause

manual recovery plan

helper-agent prompt

verification checklist

escalation point

that last part is the whole game.

because once you can say “this is where it broke, this is what healthy looks like, and this is where i stop guessing,” the stack starts feeling less magical and more usable.

more control. better odds. less time lost to narrative.
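the six outputs listed earlier fit a simple record. here’s a hypothetical sketch of that shape, with a completeness check so a report without a verification step or an escalation point doesn’t count as done. the field names are mine, not the paid version’s format.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RecoveryReport:
    diagnosis: str
    root_cause: str  # the smallest proven root cause, not the loudest symptom
    recovery_plan: list[str] = field(default_factory=list)
    helper_prompt: str = ""
    verification: list[str] = field(default_factory=list)
    escalation_point: str = ""

    def is_complete(self) -> bool:
        # a report without verification or an escalation point isn't finished
        return all([self.diagnosis, self.root_cause, self.recovery_plan,
                    self.helper_prompt, self.verification, self.escalation_point])

    def render(self) -> str:
        lines = [
            f"diagnosis: {self.diagnosis}",
            f"smallest proven root cause: {self.root_cause}",
            "manual recovery plan:",
            *(f"  {i}. {step}" for i, step in enumerate(self.recovery_plan, 1)),
            f"helper-agent prompt: {self.helper_prompt}",
            "verification checklist:",
            *(f"  [ ] {item}" for item in self.verification),
            f"escalation point: {self.escalation_point}",
        ]
        return "\n".join(lines)
```

the diagnosis field is where a line like “the memory branch shows workspace drift after the last agent restart” belongs; “it stopped working” wouldn’t pass.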

this is the full unstuck machine. open it in chatgpt, or copy the prompt into your llm of choice and use it there.

👇 the unstuck machine for our paid subscribers